In this article, I will explain several software testing metrics and KPIs, why we need them, and how we should use them. This article is based on my experiences and understanding. I will also quote from various books and articles, which are listed in the references section at the end of this article.

I want to start with metrics. Metrics can be very useful or very harmful to your development and testing lifecycle, depending on how you interpret and use them. In any kind of organization, people (managers, testers, developers, etc.) talk about metrics and how to measure correctly. Some of them use very dangerous metrics to assess team members’ performance; others use relevant, meaningful, and insightful metrics to improve their processes, efficiency, knowledge, productivity, communication, collaboration, and morale. If you measure the right metrics in the right way and transparently, they will help you understand the team’s progress towards its goals and show the team’s successes and deficiencies.

In lean software development, we should focus on concise metrics that lead to continuous improvement. Reference [1] cites Implementing Lean Software Development: From Concept to Cash by Mary and Tom Poppendieck (2007), which states that the fundamental lean measurement is the time it takes to go “from concept to cash,” from a customer’s feature request to delivered software. They call this measurement “cycle time.” The focus is on the team’s ability to “repeatedly and reliably” deliver new business value. The team then tries to improve its process and reduce the cycle time continuously.

These metrics need a “whole team” approach because every team member’s effort affects their results. Time-based metrics are critical to improving our speed, so we should ask ourselves questions about speed, latency, and velocity. One of the most used metrics in the agile world is Team Velocity. It shows how many story points (SP) the team completes during a single sprint, and it is one of Scrum’s key metrics. It is calculated at the end of each sprint by summing the points of the completed user stories.
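As a minimal sketch of that calculation, the Python snippet below sums the points of the stories completed in a sprint. The `Story` class, its fields, and the sample story keys are hypothetical and only serve the illustration; real data would come from your own tracker.

```python
from dataclasses import dataclass


@dataclass
class Story:
    key: str      # hypothetical story identifier
    points: int   # story point estimate
    done: bool    # True if the story was completed in the sprint


def sprint_velocity(stories):
    """Return the sum of story points for completed stories only."""
    return sum(s.points for s in stories if s.done)


# Hypothetical sprint content: two completed stories and one unfinished story.
sprint = [
    Story("US-1", 5, True),
    Story("US-2", 3, True),
    Story("US-3", 8, False),
]

print(sprint_velocity(sprint))  # 5 + 3 = 8; the unfinished story is ignored
```

Unfinished stories are deliberately excluded, because velocity counts only the work the team actually completed.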

Lean development focuses on delighting end-users (customers), which should be the team’s goal. Thus, we also need to measure business metrics such as Return on Investment (ROI) and business value. If the main business goal of the software is to reach and gain more customers, we need to deliver software that serves this purpose.

In another reference, Sarialioğlu states that “without any metrics and measures, software testing becomes a meaningless and unreal activity. Imagine, in any project, that you are unaware of the total effort you have expended and the total number of defects you found. Under these circumstances, how can you identify the weakest points and bottlenecks?” [2] This approach is more metric-based, and it means you should talk in numbers such as:

- Total Test Effort and Test Efficiency (with schedule and cost variances)

- Test Execution Metrics (passed/failed/in progress/blocked etc.)

- Test Effectiveness (number of defects found in system/total number of defects found in all phases)

- Total number of Defects and their Priorities, Severities, and Root Causes (dev, test, UAT, stage, live)

- Defect Turnaround Time (Developer’s defect fixing time)

- Defect Rejection Ratio (Ratio of rejected/invalid/false/duplicate defects)

- Defect Reopen Ratio (Ratio of fixed defects that are reopened)

- Defect Density (per development day or line of code)

- Defect Detection Ratio (per day or testing effort)

- Test Coverage, Requirement Coverage, and so on…

In the “Mobile Testing Tips” book [5], the importance of metrics is stated as follows: “without the knowledge you would obtain through proper test metrics, you would not know how good/bad your testing was and which part of the development life cycle was underperforming.” The whole team approach is also critical for the metrics you measure and report. Some of the tips are listed below; for the rest, I suggest you read the book.

- Tell people why metrics are necessary.

- Explain each metric you gather to all team members and stakeholders, not just the test team.

- Make people believe in metrics.

- Try to be informative, gentle, and simple in your reports.

- Try to evaluate/monitor/measure processes and products rather than individuals.

- Try to report both point-in-time and trend metrics.

- Try to add your comments and interpretation with your metrics.

- Try to be 100% objective.

- Metrics should be accessible 24/7.

The book also groups metrics into three categories: test resources, test processes, and defects. Resource metrics are time, budget, people, effort, efficiency, etc. Process metrics are test case numbers, statuses, requirement coverage, etc. Defect metrics are the number of defects, defect statuses, defect rejection ratio, defect reopen ratio, defect root causes, defect platforms, defect types, etc. Finally, the book states that metrics make the test process transparent, visible, countable, and controllable and, in a way, allow you to repair your weak areas and manage your team more effectively.

Some approaches, such as Rapid Software Testing (RST) by Bach & Bolton, state that you need to use discussion rather than KPIs and objective metrics. Bach emphasizes that you must gather relevant evidence through testing and other means, and then discuss that evidence. [3]

Also, Bach wrote in Lesson 65 of the “Lessons Learned in Software Testing” book [4], “Never use the bug-tracking system to monitor programmers’ performance.” If you report that a programmer has a huge number of defects, he will put all his energy into fixing his bugs and postpone all other tasks. Another crucial mistake is to attack and embarrass a developer for his bugs. This causes big problems for team collaboration and the whole team approach. The other developers on the team will also respond very defensively; they will start to argue about each bug and reject most of them. They typically say, “It works on my machine,” “Have you tried it after clearing the browser cache with CTRL+F5?”, “This is a duplicate bug,” “It is not written in the requirements,” and so on. Worst of all, they may start to attack your testing methods, approaches, strategies, and skills. This causes a terrible mess in the team, creates big communication problems among team members, and reduces team efficiency.

Lesson 66 tells us, “Never use the bug-tracking system to monitor testers’ performance.” If you start to evaluate testers by the number of bugs they find, they may not behave the way you intended. They may focus on easy bugs, such as cosmetic defects of all kinds, and on bug counts rather than on questioning the requirements and examining edge cases. They may report the same bug several times, which irritates the developers and wastes time. They are less likely to spend time coaching other testers or on self-improvement activities. It also hurts their morale. For example, in team A, suppose the developers write unit tests with 99% coverage and run the main business flow tests before the testing phase, and the test environment, data, network, etc. are very stable. In this situation, tester X may not find many defects.

On the other hand, in team B, if the developers do not do the necessary groundwork before the testing phase, tester Y may find a great many defects. In these conditions, assessing tester X and tester Y by their bug counts would be very unfair. You need to understand the reasons for the bugs and question them, not only consider the bug counts.

I agree with Bach on lessons 65 and 66. Assessing developers and testers by bug counts leads to too many problems. You need to focus on the reasons for the bugs and improve your system, process, methodologies, strategies, plans, etc. There are several lessons related to bugs in the “Lessons Learned in Software Testing” book, and it is worth reading.

In 2004, Cem Kaner and Walter P. Bond published an article on metrics [6]. Its first section states that some companies established metrics programs to conform to the criteria of models such as CMMI and TMMi, and even fewer succeeded with them. These metrics programs are also very costly: Fenton [7] estimates a cost of 4% of the development budget. Robert Austin [8] described measurement distortions and dysfunctions. The main idea is that distortion arises when a company is managed by measurement results while those measurements (metrics) are inadequately validated, insufficiently understood, and not tightly linked to the attributes they are intended to measure. Kaner and Bond proposed a new approach: the use of multidimensional evaluation to obtain the measurement of an attribute of interest. They conclude their paper this way: “There are too many simplistic metrics that don’t capture the essence of whatever it is that they are supposed to measure. Too many uses of simplistic measures don’t even recognize what attributes are supposedly being measured. Starting with a detailed task analysis or attribute under study might lead to more complex and qualitative metrics. Still, we believe it will also lead to more meaningful and useful data.”

In my opinion, this approach may give you very useful data to help and improve the testers. In practice, however, it takes too much time, and the testers may perceive it as “micromanaging the details of the tester’s job.” Also, if the team practices an agile development framework, it will be much harder to follow this approach.

In another article [9], the below suggestions are provided:

- When using metrics, we should go beyond metrics and seek qualitative explanations or “stories” being told about metrics.

- Measure humans and their work in numbers, use metrics to gain more information and use them as clues to solve and uncover deeper issues.

- Do not use metrics to judge the work of a human, do not reward or punish an individual’s work. The programmer/Tester productivity metric is something that you need to avoid.

- Create an environment where metrics misuse can be minimized.

- Metrics are meant to help you think, not to think for you – Alberto Savoia.

I agree with the suggestions above, and from this point on, I want to share some metrics with you. The list of possible metrics is endless. You may need to choose metrics based on your software development life cycle, development framework, company culture, goals, etc. I will present some software testing metrics below.

Number of User Stories (If you use Agile Scrum)

The number of stories in each sprint.

Number of Test Cases

Number of test cases per project/phase/product/tester/story etc. You can generate many metrics based on the test case numbers.

Test Case Execution Progress

It is the test case execution progress of a sprint, project, phase, or custom period.

- Passed Test Cases

- Failed Test Cases

- Blocked Test Cases

- In Progress Test Cases

- Retested Test Cases

- Postponed Test Cases

Test Tasks Distribution per Tester

It is a distribution of test tasks in your team. You can easily gather this information with a JQL query and pie chart graph using JIRA.

Test Tasks Status

You can monitor this metric per sprint, project, or a defined time period.

Test Tasks per Projects

It shows how many test tasks are in each project in a given period.

Test Case Writing Tasks Distribution per Tester

If you follow a classical testing approach and write strict test cases in your project, you can measure the test case writing tasks per tester.

Test Case Writing Tasks Status

It is the status of test case writing tasks per a given period.

Test Case Writing Tasks per Projects

You can get how many test case writing tasks you have for each project in a given period of time.

Created Defects Distribution per Tester

It shows the created defects per tester in a given time interval.

Defects Status

It shows the status of defects in a given time interval.

Defects Root Causes

It shows the root causes of the defects in a given time interval.

Defect Tasks Priority (Such as Minor – Major – Critical – Blocker)

It shows business priorities of defects.

Defect Tasks Severity (Such as Minor – Major – Critical – Blocker)

It shows system-level severities of defects.

Defects Environment Distribution (Such as Test – UAT – Staging – Live)

It shows the distribution of defects per environment.

Defects per Project

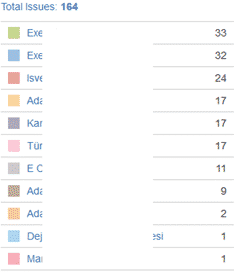

It shows the distribution of defects per project.

Resolved/Closed Defects Distribution per Developer

It shows the resolved defects distribution per developer.

Regression Defects Count

It shows how many regression defects you have in a given time period.

Resolved/Closed Defects Distribution per Project Hour

It shows how much time the team spent on defects per project in a given time period.

Worklog Distribution of Testers per Tasks (Such as Test Execution, Test Case Writing, etc.)

Requirement Coverage

This metric shows how many requirements you covered with your test cases.

Number of Defects Found in Production

This metric shows defects found in production. It shows your development, system, network, etc. quality. You have to make a Pareto analysis to find and prioritize your major problems.
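A Pareto analysis can be sketched in a few lines of Python: count the defects per root cause, sort the causes from most to least frequent, and keep the causes that together account for a chosen share of all defects. The root-cause labels, the sample data, and the 80% threshold below are illustrative assumptions, not values from a real project.

```python
from collections import Counter


def pareto(causes, threshold=80.0):
    """Return (cause, count, cumulative %) rows until the threshold is reached."""
    counts = Counter(causes)
    total = sum(counts.values())
    rows = []
    cumulative = 0.0
    # most_common() yields the causes sorted from most to least frequent.
    for cause, count in counts.most_common():
        cumulative += 100.0 * count / total
        rows.append((cause, count, round(cumulative, 1)))
        if cumulative >= threshold:
            break
    return rows


# Hypothetical root causes of ten production defects.
production_defects = ["config"] * 5 + ["code"] * 3 + ["network"] + ["data"]

for cause, count, cum_pct in pareto(production_defects):
    print(f"{cause}: {count} defects, cumulative {cum_pct}%")
```

With this sample data, two root causes explain 80% of the production defects, so they are the first candidates for improvement work.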

Cumulative Defect Graph

This graph shows the defects count cumulatively in a given time period.

Test Case Execution Activity

This shows the activity of your test case execution statuses in a given period.

Test Case Activity per Day

This metric shows how many test cases are created and updated per day.

Test Case Distribution per Tester

This shows how many test cases are written by each tester in a team.

Cost per Detected Defect

It is the test effort and bug count ratio in a given period, such as sprint.

Example: 4 test engineers performed two days of testing and found ten errors.

4 people * 2 days * 8 hours = 64 hours

64/10 = 6.4 Hours / Error

On average, the test engineers spent 6.4 hours per error.

I think this is a very low-level metric, and it may also be hard to measure. We should focus on delivering valuable, defect-free, high-quality, fast, secure, and usable products.

Defects per Development Effort

This is the ratio between development effort and the defects in a given time period such as sprint, week, month, etc.

Example: 5 programmers have five days of software development activity. A total of 100 defects were found.

5 people * 5 days * 8 hours = 200 hours

200/100 = 2 Hours / Defect

This shows that one defect arises for every 2 hours of development effort.
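The two effort-based examples (Cost per Detected Defect and Defects per Development Effort) share the same arithmetic, so a single helper can reproduce both. This is only a sketch: the function name is hypothetical, and the 8-hour working day is the assumption used in the examples.

```python
def hours_per_defect(people, days, defects, hours_per_day=8):
    """Return the effort in hours spent per defect."""
    if defects == 0:
        raise ValueError("no defects recorded, so the ratio is undefined")
    total_hours = people * days * hours_per_day
    return total_hours / defects


# Cost per Detected Defect: 4 test engineers, 2 days, 10 errors.
print(hours_per_defect(people=4, days=2, defects=10))   # 64 / 10 = 6.4

# Defects per Development Effort: 5 programmers, 5 days, 100 defects.
print(hours_per_defect(people=5, days=5, defects=100))  # 200 / 100 = 2.0
```

Raising an error for zero defects is a design choice: a period without defects has no meaningful hours-per-defect value, and returning 0 would be misleading.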

Defect per User Story

This metric shows defect counts per user story.

Defect Fixation Time

This metric shows how much time developers spent fixing the defects.

Defect Clustering

The report shows how many defects have been found in each module of our product in a given time period.

Example: 20% of the defects are found in the Job Search module, and 30% are in the Admin screens.

Successful Sprint Count Ratio

Successful Sprint Count Ratio = (Successful Sprint # / Total Sprint #) * 100

The sprint goal must be approved by the PO at the end of each sprint. Statistics on all successful sprints are collected, and the percentage of successful sprints is calculated. Some agile teams use this metric as a KPI, but most agile enthusiasts are against this practice.

Quality Ratio

Quality Ratio = (Successful Test Cases/ Total Test Cases) * 100

It is a metric based on the passed and failed rates of all tests run in a project according to the determined period. Please do not use this metric to assess an individual. Focus on problems and try to solve them. It has to lead you to ask questions. Using this metric as a KPI may cause problems between testers and developers in the software development life cycle and may damage the behavior of being transparent. Developers want the testers to open fewer defects, and testers may tend to open fewer defects to keep the “Quality Ratio” higher. Be careful about this metric.

Test Case Quality

Written test cases are evaluated and scored according to the defined criteria. If it is impossible to examine all the test cases, they are evaluated by sampling. Our goal should be to produce quality test cases by paying attention to the defined criteria in all the tests we write. You may use this metric as a KPI.

Test Case Writing Criteria:

- Test cases must be written for fault finding.

- Application requirements must be understood correctly.

- Areas to be affected should be identified.

- The test data should be accurate and cover all possible situations.

- Success and also failure scenarios should be covered.

- The expected results should be written in the correct and clear format.

- Test & Requirement coverage must be fully established.

- When the same tests are run over and over, they no longer find new defects. To overcome this paradox (known as the pesticide paradox), test cases should be reviewed regularly, and new tests should be written for potential defects in different parts of the system.

- Each test scenario must cover a requirement.

- Requirements and test scenarios should not contain vague words such as “possibly” or “maybe”; exact results should be given.

UAT Defect Leakage/Slippage Ratio

Defect Leakage = (Total Number of UAT Defects / (Total Number of Valid Test Defects + Total Number of UAT Defects)) * 100

UAT Defects: Defects that the PO finds while testing a story in the UAT environment, after the requirements have been coded, the unit tests have passed, and the test experts have finished test execution.

Both DEV and TEST teams are responsible for sending a faultless application to UAT. The number of faults found during UAT is expected to be lower than the number of valid faults found during the testing and development processes. If the UAT defects exceed the TEST defects, there is a significant problem in the development and testing phases. The UAT Defect Leakage is obtained by dividing the defects found in UAT by the sum of the UAT and TEST defects and multiplying the result by 100. Some teams use this metric as a KPI for their testers and developers.

1) Valid test defects in the test environment= 211

2) Defects in the UAT environment= 13

3) UAT Leakage Ratio = (13/(211 + 13))*100 = 5.8%

Note: This measurement can be taken one step further by weighting each defect by its priority and severity. With this method, each defect gets a score based on its priority and severity.

Trivial: 0 Points, Minor: 1 Point, Major: 2 Points, Critical: 3 Points, Blocker: 4 Points
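The leakage calculation and its weighted variant can be sketched as follows. The point values are the ones listed above; using only the severity as the weight, the function names, and the sample defect lists for the weighted case are simplifying assumptions made for this illustration.

```python
# Point values taken from the list above.
SEVERITY_POINTS = {"Trivial": 0, "Minor": 1, "Major": 2, "Critical": 3, "Blocker": 4}


def leakage_ratio(test_value, uat_value):
    """Return UAT / (TEST + UAT) as a percentage; inputs are counts or scores."""
    total = test_value + uat_value
    if total == 0:
        return 0.0
    return 100.0 * uat_value / total


def severity_score(severities):
    """Sum the points of a list of severity labels (assumed weighting)."""
    return sum(SEVERITY_POINTS[s] for s in severities)


# Plain count-based ratio with the numbers from the example: 211 test, 13 UAT.
print(round(leakage_ratio(211, 13), 1))  # 5.8

# Weighted variant with hypothetical defects.
test_defects = ["Major", "Major", "Major", "Minor", "Minor"]  # score 8
uat_defects = ["Critical"]                                    # score 3
weighted = leakage_ratio(severity_score(test_defects), severity_score(uat_defects))
print(round(weighted, 2))  # 27.27
```

In the weighted case, a single critical defect that leaks to UAT moves the ratio far more than its count alone would, which is the point of scoring by severity.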

Defect Removal Efficiency Before Staging

Defect Removal Efficiency = (Total Number of (Test + UAT) Defects / Total Number of (Test + UAT + Staging) Defects) * 100

It shows the share of defects caught before moving to the staging environment. Ideally, Test Defects > UAT Defects > Staging Defects. With this metric, we measure how efficiently we detect and remove defects before staging environment testing.

For example, if 20 Test, 10 UAT, and 5 Staging defects are captured, our defect removal efficiency is (30/35) * 100 = 85.7%. Some teams use this metric as a KPI.

You can also measure your defect removal efficiency before Production.
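Here is a small sketch that computes the removal efficiency before any environment in the pipeline. The environment order and the production defect count are assumptions; "before Production" is read here as (Test + UAT + Staging) divided by all defects including Production, by analogy with the staging formula.

```python
# Assumed order in which a release moves through the environments.
ENV_ORDER = ["test", "uat", "staging", "production"]


def removal_efficiency(counts, before):
    """Percentage of defects caught before the given environment."""
    cut = ENV_ORDER.index(before)
    caught = sum(counts.get(env, 0) for env in ENV_ORDER[:cut])
    total = caught + counts.get(before, 0)
    return 100.0 * caught / total if total else 0.0


# 20 Test, 10 UAT, and 5 Staging defects as in the example above;
# the 2 production defects are hypothetical.
counts = {"test": 20, "uat": 10, "staging": 5, "production": 2}

print(round(removal_efficiency(counts, before="staging"), 1))     # 85.7
print(round(removal_efficiency(counts, before="production"), 1))  # 94.6
```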

Defect Resolution Success Ratio

Defect Resolution Success Ratio = ((Total Number of Resolved Defects – Total Number of Reopened Defects) / Total Number of Resolved Defects) * 100

It is a KPI showing how many resolved defects stay resolved without being reopened. Ideally, if no defect is reopened, 100% success is achieved in terms of resolution. If 3 out of 10 resolved defects are reopened, the resolution success will be ((10-3)/10)*100 = 70%.

Conversely, subtracting this ratio from 100 will give us the “Defect Fix Rejection Ratio.”
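Both ratios can be derived from the same two numbers, as this short sketch shows. The function names are hypothetical.

```python
def resolution_success_ratio(resolved, reopened):
    """Percentage of resolved defects that were not reopened."""
    if resolved == 0:
        return 0.0
    return 100.0 * (resolved - reopened) / resolved


def fix_rejection_ratio(resolved, reopened):
    """Complement of the success ratio: percentage of fixes that were rejected."""
    return 100.0 - resolution_success_ratio(resolved, reopened)


# 3 of 10 resolved defects are reopened, as in the example above.
print(resolution_success_ratio(10, 3))  # 70.0
print(fix_rejection_ratio(10, 3))       # 30.0
```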

Retest Ratio

Retest Ratio = (Total Count of Reopened Defects / Total Number of Defects) * 100

This metric shows how many times defects are REOPENED and RETESTED. Each reopened defect has to be retested, so this metric shows the efficiency we lose in retesting resolved defects.

Total Count of Reopened Defects = Total Number of Retests. Every reopening counts, so a defect that is reopened three times counts three times.

For example, if none of the ten defects are Reopened:

(0 / (10)) * 100 = 0

A 0% Retest Ratio indicates that we have not spent any effort on retesting, so we are very efficient in terms of this metric.

For example, if ten defects are Reopened 30 times in total:

(30 / (10)) * 100 = 300

A 300% Retest Ratio indicates that, on average, each defect had to be retested three times.

If we open ten defects and each of them is reopened once:

(10 / (10)) * 100 = 100%

A 100% Retest Ratio indicates that, on average, each defect had to be retested once.

Some organizations use this metric as a KPI to assess development teams.
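The three cases can be reproduced with a short sketch that counts every reopening of every defect. The defect keys and the dictionary layout are hypothetical; in practice, the reopen counts would come from your bug tracker's history.

```python
def retest_ratio(reopen_counts):
    """reopen_counts maps a defect key to the number of times it was reopened."""
    if not reopen_counts:
        return 0.0
    total_reopens = sum(reopen_counts.values())
    return 100.0 * total_reopens / len(reopen_counts)


# Ten hypothetical defects for each of the three cases described above.
never_reopened = {f"BUG-{i}": 0 for i in range(1, 11)}
reopened_once = {f"BUG-{i}": 1 for i in range(1, 11)}
reopened_three_times = {f"BUG-{i}": 3 for i in range(1, 11)}

print(retest_ratio(never_reopened))        # 0.0
print(retest_ratio(reopened_once))         # 100.0
print(retest_ratio(reopened_three_times))  # 300.0
```

Because reopen events are summed rather than distinct defects, the ratio can exceed 100%.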

Rejected Defect Ratio

Rejected Defect Ratio = (Number of (Test + Staging) Rejected Defects/ Total Number of (Test + Staging) Defects) * 100

It is a metric that measures the share of invalid defects that a test engineer has opened. It can be used to measure the efficiency of defect reporting. Too many rejected defects indicate inefficiency and time loss in the development life cycle. Some organizations use this metric as a KPI for their test teams.

Total Number of (Test + Staging) Defects: 217

Rejected (Test + Staging) Defects: 3

Rejected Defect Ratio = (3/217)*100 = 1.38%

I did not take UAT defects into consideration because they are found by the PO in the UAT environment. If you want, you can also add UAT defects to this measurement, but in that case you should not use this metric as a tester KPI.
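The scoping decision (counting only Test and Staging defects) can be made explicit in code. This is a sketch with hypothetical field names and statuses; the totals match the example above (217 in-scope defects, 3 of them rejected), while the split between environments and the UAT entries are made up.

```python
def rejected_defect_ratio(defects, environments=("test", "staging")):
    """Percentage of rejected defects among those found in the given environments."""
    in_scope = [d for d in defects if d["env"] in environments]
    if not in_scope:
        return 0.0
    rejected = sum(1 for d in in_scope if d["status"] == "rejected")
    return 100.0 * rejected / len(in_scope)


# 217 Test + Staging defects with 3 rejections, plus 5 UAT defects out of scope.
defects = (
    [{"env": "test", "status": "rejected"}] * 3
    + [{"env": "test", "status": "closed"}] * 200
    + [{"env": "staging", "status": "closed"}] * 14
    + [{"env": "uat", "status": "rejected"}] * 5
)

print(round(rejected_defect_ratio(defects), 2))  # 1.38
```

Passing `environments=("test", "staging", "uat")` would include the UAT defects, which changes what the number says about the testers.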

Test Case Defect Density

Test Case Defect Density = (Number of Defects / Total Number of Executed Test Cases) * 100

It is the density of defects found by the executed test cases. For example, if 200 of 250 test cases are run and 20 of them find defects, the defect density is (20/200) * 100 = 10%.

My comment on this metric/KPI: The same value can be interpreted as a success for the development team and a failure for the test team, or vice versa. If we use this metric in this way, we will create serious conflicts between development and testing teams. It would be more logical not to treat this metric as a KPI for development and test teams. It can vary with the size of the development work, the experience of developers and testers, time pressure, lack of documentation, a poor SDLC process, and so on. Too many variables are connected to this metric, so it is better not to use it as a KPI.

You can also use metrics to prepare your sprint test status reports. Below are the fields of a sample test status report. You can modify them as you want.

Sprint Status Report

- Risks

- Obstacles and Emergency Support Needed Problems

- Test Case Execution Status

- Test Case – Requirement Coverage

- Test Tasks Status

- UAT Status

- Defect Reports per Statuses/Types/Root Causes/Environments etc.

- Sprint Burn-Down Chart

- Sprint Progress Chart

Process adherence and improvement (KPI)

This can be a KPI for your team members. If a team member comes with unique and innovative ideas that lead you to perform your test efforts much faster with better quality, then you should congratulate and reward those team members. Make them happy and passionate.

To Obtain International Test Certification (KPI)

This can be a KPI for your testers. Especially if there are some junior testers in your team, you can set this goal for them to get international test certification. This will also improve their test knowledge. If your testers are experienced, then you should set advanced certification goals. However, if you believe in test philosophies such as RST (Rapid Software Testing), you should not spend time on this certification. This KPI depends on your software testing philosophy.

To Make a Presentation at Meetups or Conferences (KPI)

Making technical presentations is a good goal for your testers. It improves their passion and vision for software testing. They can also widen their networks and be ambassadors for your company and team. At each meetup or conference, they learn new things and share their knowledge. You should support your testers in promoting themselves and your team, sharing their knowledge, and becoming international software testers.

Complete Online Training Programs (KPI)

There are several online learning websites available. Thus, you can assign related courses to your team members and monitor them to finish those assigned courses. In this way, you can improve your team’s skills and competencies.

Soft Skill KPIs

There are a lot of soft skill KPIs that you can set as goals for your team, such as:

- Initiative and Dynamism

- Problem Solving

- Teamwork

- Adaptability

- Flexibility

- Vision

- Creativity

- Technical Competence

- And so on…

Also, according to [1], an agile tester should follow these principles:

- Provide continuous feedback (Proactive)

- Deliver value to the customer (Result oriented)

- Enable face-to-face communication (communicative)

- Have Courage (Brave)

- Keep it Simple (Lean)

- Practice Continuous Improvement (Visionary)

- Respond to Change (Flexible)

- Self-Organize (Motivated)

- Focus on People (Synergistic)

- Enjoy (Humoristic)

Also, at SeleniumCamp-2017, Alper Mermer talked about software testing metrics, and he listed those metrics as follows:

- Production Monitoring and Metrics

- Performance Measurement

- Security Warnings

- Code Quality Metrics (Static Code Analysis Metrics)

- Test Coverage Metrics

- API + GUI Test Metrics

- Defects by Priority and Severity

- Production Bugs / Incidents

- Build Failures and so on…

As you have seen in this article, metrics are limitless. Using some of them as a KPI may be dangerous. You can use them to see where you stand and to question your system, methodology, and deficiencies for continuous improvement. Using them as a tool for reward or punishment will create enormous pressure and stress on your team. Try to use them correctly and effectively based on your beliefs, philosophy, organizational culture, etc., but whatever you do, please be Fair! Seek first to understand, then act! Empathize! Create Trust and Love! Help your team! Believe in them! This is my advice for being a “good” maestro.

You can also read my Q&A on Agile Testing Mindset article here: https:/agile-testing/

It is a long article, but I hope you enjoyed it. Happy Testing! :)

Onur Baskirt

References

[1] Agile Testing: A Practical Guide for Testers and Agile Teams – Lisa Crispin, Janet Gregory, Addison-Wesley Signature Series

[2] Software Testing Tips Experiences & Realities – Baris Sarialioglu, Keytorc Inspiring Series

[3] http://www.satisfice.com/blog/archives/category/metrics

[4] Lessons Learned in Software Testing – Cem Kaner, James Bach, Bret Pettichord

[5] Mobile Testing Tips Experiences & Realities – Baris Sarialioglu, Keytorc Inspiring Series

[6] http://www.kaner.com/pdfs/metrics2004.pdf

[7] N. E. Fenton, “Software Metrics: Successes, Failures & New Directions,” presented at ASM 99: Applications of Software Measurement, San Jose, CA, 1999.

[8] R. D. Austin, Measuring and Managing Performance in Organizations. New York: Dorset House Publishing, 1996

[9] https://conference.eurostarsoftwaretesting.com/wp-content/uploads/w13-1.pdf

Onur Baskirt is a Software Engineering Leader with international experience in world-class companies. Now, he is a Software Engineering Lead at Emirates Airlines in Dubai.

It is a well-written article with the writer’s accurate observations and gleanings from different sources.

I like the approach of focusing on the solution: applying well-known development and test practices before KPI measurements.

Thank you so much Erdem. Your kind comments are very valuable for me. I am happy that you liked the content and the approach.

Excellent article. Very insightful!

Thank you Saunak.

I have used many of these metrics during my professional career. Never have I seen them all in one place. Excellent Job !

Thank you Joe.

@Onur Hello Onur, thank you for the article. It is very helpful. Onur – in my company we are using Jira with TestRail integrated. I have been trying to create different types of metrics but have not succeeded yet. Just wondering, do you provide consulting services?

Hi Irina, thank you for your kind comments. Yes, I can help you as much as I can. I will send you an email from my personal email address.

@Onur, thank you for the wonderful document. We have been using some of these KPIs for the waterfall model and were successful in achieving our goal. Now that we are agile, I need to modify some of these KPIs to better suit this environment. Some of the KPIs that you have defined are helpful, but I need some guidance.

Hi @John, thank you very much. You are right. We should modify KPIs and metrics based on our companies and their cultures. However, we have to stick with the core principles while modifying them. Thanks for the feedback.

Really helpful, thank you!

welcome :)

Could you share more about the key success percentage for each metric? For example: the Quality Ratio of one sprint should be 100%.

It depends on you. You can try to achieve the best or reach it progressively. I cannot share a magic number or percentage here. Let’s assume that in Sprint 1 your Quality Ratio is 20%. This is a bad sign and a question mark. Conversely, if it is 100%, that is also a question mark. Whenever you get very high or very low numbers, you should dig in to understand the details. Ideally, if you have a big project and you start test execution, it is typical and expected that the tests find some problems (bugs). If not, you should understand the reasons: maybe the tests are not powerful enough to cover all scenarios, or maybe the code is implemented very well and the unit tests have 100% coverage, which indicates that the team did everything very well before the test phase. Use these metrics as an indicator, not as a performance penalty or reward for the team. Once you identify the good and bad parts of your process, you can take action.